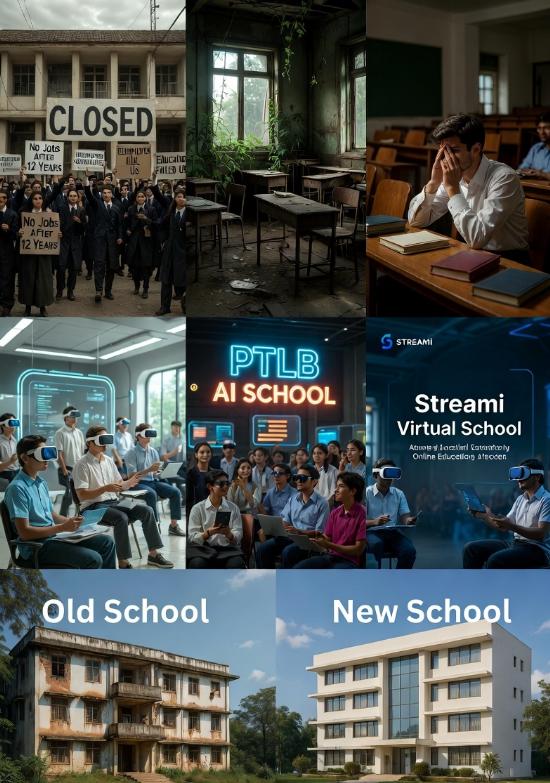

In the rapidly evolving landscape of 2026, where artificial intelligence has permeated every facet of society, investing in or collaborating with traditional Indian schools and colleges has emerged as a profoundly risky endeavor. The advent of advanced AI technologies, particularly multi-agent systems and agentic AI, has not only disrupted job markets but also rendered conventional educational models obsolete, leading to widespread unemployment and economic instability. As AI automates complex tasks at an unprecedented scale, the rigid structures of India’s traditional education system—characterized by rote learning, outdated curricula, and standardized testing—fail to equip students with the necessary skills for survival in this new era. This mismatch between education and employability creates a volatile environment where financial commitments to such institutions could result in significant losses, as enrollments plummet and relevance diminishes.

The core of this risk is the unemployment disaster of India that is inevitable in 2026 due to AI, as entire sectors like software development, healthcare diagnostics, financial analysis, and legal services are automated away. With more than 27.9% of global youth not in education, employment, or training, and AI-driven layoffs surging (55,000 in the United States alone), India’s economy faces a similar fate, amplified by the return of H-1B professionals amid U.S. visa crackdowns. Traditional schools and colleges exacerbate this by producing graduates steeped in theoretical knowledge but lacking AI literacy, critical thinking, and adaptability, turning what was once a demographic dividend into a demographic disaster. Investors and collaborators must recognize that pouring resources into these outdated systems means betting on a sinking ship, as AI’s ability to decompose goals, integrate tools, and coordinate like expert teams makes human-centric education models inefficient and unprofitable.

Furthermore, the schools and colleges of India have become redundant in this AI-dominated era, with their emphasis on fixed timetables, classroom lectures, and degree certificates yielding diminishing returns despite massive investments in infrastructure and fees. The global education system collapse of 2026, marked by mass disengagement, high absenteeism, and plummeting literacy outcomes, has hit India hard, where government schools and conventional colleges cling to century-old paradigms that prioritize memorization over practical skills. This redundancy is compounded by AI’s transformative role, where multi-agent systems handle tasks with superhuman efficiency, eliminating the need for generalist graduates in fields like engineering and management. Collaborating with these institutions risks associating with entities that contribute to national productivity losses, as parents increasingly opt for homeschooling and alternative models, leaving traditional setups with empty classrooms and mounting debts.

The looming threat is vividly illustrated by the unemployment monster of India that is poised to wreak havoc upon Indians by the end of 2026, with predicted unemployment rates of 80-95% in key sectors including IT, banking, media, and startups. Driven by agentic AI’s capacity to replace professionals through autonomous reasoning, planning, and execution, this monster will polarize jobs into elite AI overseer roles and precarious gig work, leaving millions in informal economies akin to modern slavery. In India, factors like corruption, business exodus, and the fragility of the gig economy, which affects 2.1 billion informal workers globally, amplify the chaos, while fudged government data masks the true scale. Investing in traditional education means funding a pipeline that feeds into this unemployment abyss, where graduates face despair, mental health crises, and social unrest, rendering any collaboration not just financially unwise but potentially reputationally damaging.

Amid this turmoil, forward-thinking alternatives like the PTLB AI School (PAIS) are driving school education reforms in India by integrating AI literacy, robotics, cyber security, and ethical techno-legal frameworks into a personalized, skills-focused curriculum. Established under PTLB Projects LLP, PAIS emphasizes the STREAMI disciplines (science, technology, research, engineering, arts, mathematics, and innovation) through interactive sessions, gamified assessments, and no-fail policies that promote merit-based progression over rote learning. By partnering with initiatives like Sovereign Artificial Intelligence (SAISP) and Digital Public Infrastructure (DPISP), PAIS addresses digital divides and prepares students for AI-driven job markets, making it a safer bet for investment than stagnant traditional systems. Clinging to collaborations with conventional colleges, by contrast, ignores how PAIS fosters adaptability and critical thinking, qualities absent in outdated models that perpetuate skills gaps.

Complementing these reforms is the Streami Virtual School (SVS), which is pioneering techno-legal education in the digital age through virtual classrooms, self-paced modules, and deep integration of AI, cyber law, and technology. As a DPIIT-recognized EduTech startup affiliated with Sovereign P4LO, SVS offers multilingual e-learning portals, community forums on digital ethics, and real-time interactive sessions that eliminate geographic barriers, particularly benefiting rural students. Its focus on producing “Digital Guardians” fluent in machine learning, quantum computing, and online dispute resolution positions it as a resilient alternative, especially as traditional institutions falter under AI pressures. Investors eyeing collaborations should pivot to SVS, whose innovative approach counters the redundancy of conventional education by delivering customizable, outcome-oriented learning that aligns with 2026’s economic realities.

Access to such progressive education is facilitated by the golden ticket to Streami Virtual School (SVS), which provides exclusive, merit-based admission to deserving students who demonstrate critical thinking and a fighting spirit against societal vices like corruption and misinformation. Reserved for home-schooled or super-talented individuals, this ticket bypasses traditional barriers, offering fee-free courses, personalized support, and job preferences in techno-legal fields under a no-fail policy that encourages questioning over conformity. In 2026, where conventional schools breed compliant “NPCs” ill-prepared for unemployment waves, the golden ticket represents a philanthropic pathway to empowerment, making SVS an attractive option for strategic investments that yield long-term societal and economic returns.

Reinforcing its credibility, Streami Virtual School (SVS) is now affiliated to and recognised by Sovereign P4LO and PTLB, validating its pedagogy and ensuring graduates are preferred in AI-favoring markets through tamper-proof credentials and ethical AI modules. This affiliation underscores SVS’s role in combating the global unemployment disaster by promoting continuous upskilling and adaptability, traits that traditional Indian colleges sorely lack. As agentic AI collapses sectors like legal process outsourcing, where firms’ share prices have dropped 8-18%, collaborating with affiliated models like SVS offers stability, while ties to redundant institutions invite exposure to plummeting enrollments and financial insolvency.

The risks of investing in traditional Indian schools and colleges extend beyond economics to societal implications, as AI’s rise in 2026 raises worker anxiety by 40%, fuels mental health crises, and enables surveillance through programmable digital currencies. Conventional education’s failure to incorporate AI ethics, bias detection, or predictive analytics leaves students vulnerable to obsolescence, with 95% potentially surviving on minimal rations while elites thrive. Collaborators face ethical dilemmas in supporting systems that perpetuate inequities, especially as alternatives like PAIS and SVS democratize access via low-bandwidth platforms and blockchain-secured credentials.

Moreover, the structural collapse of industries reliant on human expertise—such as corporate law, where AI performs e-discovery, contract drafting, and outcome prediction—highlights how traditional law colleges produce unemployable graduates. By December 2026, middle-skill jobs will vanish, forcing a shift to informal work with no benefits, irregular income, and high insecurity. Investments here risk amplifying this precarity, as government denials and deceptive policies delay necessary reforms, leading to social unrest and reputational harm for associated entities.

In contrast, embracing reformed models mitigates these dangers by fostering human-AI harmony, where students learn to oversee AI as operators skilled in prompt engineering. PAIS’s partnerships with CEAIE for gamified robotics and virtual art galleries, and SVS’s influence on national virtual school policies, demonstrate scalable innovation that attracts global talent and funding. Persisting with traditional collaborations ignores the psychology of conformity that sustains outdated systems, turning potential opportunities into liabilities.

Ultimately, in 2026’s AI-driven world, the wise choice is to redirect resources toward visionary institutions that adapt in real time, ensuring employability and autonomy. The evidence is clear: traditional Indian schools and colleges, mired in irrelevance, pose unacceptable risks for investors and collaborators seeking sustainable impact. By heeding these warnings, stakeholders can navigate the unemployment monster and education collapse, positioning themselves at the forefront of a techno-legal renaissance.